Introduction to the art and science of testing in Data Science

2 years ago

It’s been over 20 years since Test Driven Development (TDD) was introduced to the software development community. Since that time, TDD has become a standard best practice and, more importantly, it has saved countless hours for those who use it. I don’t think I’ve ever met a professional software developer who has never played with TDD.

And then there are researchers. People in research and in the broadly defined area of data science often lack formal software development training. We often focus on the data, on the results, we want to iterate quickly and change our code without having to deal with testing things all the time. When someone introduces us to TDD, we tend to discard the idea as impractical. And some of us even discard testing altogether. However, I think that this is a prime example of throwing the baby out with the bathwater.

What’s wrong with TDD in a research context?

Most research projects differ greatly from standard backend development. In addition, every software project has its own, unique characteristics. For example, goals are often defined as: ‘find the limits of what can be done’. By definition, when you’re doing research, you don’t already know the answer to the problem. And, when you don't know the 'correct' answer in advance, testing what the correct output looks like is a tricky endeavor. But, it's not impossible, as I will try to demonstrate further on.

Another point that is often raised is that TDD is too rigid for a normal data science workflow. Often, the point of research is to manipulate some formulae inside a process and to see what comes out via experimentation. This manipulation might be as simple as tweaking a hyper-parameter or it might require rewriting huge chunks of a system. Writing tests for something that may be deleted tomorrow can therefore be a waste of time. In my experience, many researchers would replace “may be” for “will” in the previous sentence.

Personal motivation

I’m guilty of committing two kinds of mistakes in the testing area:

(1) not enough testing; and (2) trying to force TDD into places where it doesn’t belong. When you don’t test enough, bad things are a matter of ‘when’ rather than ‘if’.

Even the greats make these types of mistakes, as in the following example where the Data Scientist tested too little:

(Source: https://the-turing-way.netlify.app)

On the other hand, introducing too much testing for the sake of it can lead to slow iteration speed and depressed morale in the team (because, if researchers liked carrying out testing, then they would follow a career in testing).

With that in mind I think it’s fair to say that the only answer to the conundrum of whether or not to test in a research context is that, well, it depends! By the end of this section I hope that I manage to convince you that testing during research is an art of balancing contradicting needs (speed and agility vs quality assurance). And this article could perhaps end at that point. But we’re Data Scientists, not artists, so it seems only natural to explore further the variables that govern the world of testing.

Guidelines

As stated in the introduction, there are many questions to which I cannot give definitive answers. But I can quote Warren Buffet, one of the best investors of all time, who said, "It’s better to be roughly right, than precisely wrong".

For me, this means that some rules-of-thumb and approximations can still be of enormous value, even if they do not provide exact answers. Here are some guidelines related to testing that I try to follow when writing research code:

1. Matplotlib visualization cells in a Jupyter notebook, print() statements and absolutely anything that is obvious (outside the critical zone) probably do not require much commenting or testing. You will most likely see immediately that your plot is wrong if you messed up the axes.

2. For easy tensor manipulations I tend to write comments describing their dimensions as in the examples below:

imgs = load_n_imgs(batch_size) # list of [num_channels, width, height]

batch = np.stack(imgs) # batch_size, num_channels, width, height

3. Split the code in Jupyter notebooks into logical blocks. If this is a one-off or a presentation, provide markdown comments - a 2:1 ratio of markdown description to code is reasonable. On the other hand, if this code cell is going to be reused, convert it to one or more functions.

4. Each function should have a type-annotated signature

5. Functions with complex signatures (many parameters or return values) should have Docstrings

6. When debugging a cell in a Jupyter notebook with a print(...) statement, try to convert it to an assert statement. These are usually asserts on a variable value or shape. For example, assert img.shape[0] == 3.

7. Functions that perform any complex logic should have some form of unit tests. This can be a notebook cell with a bunch of asserts on function calls, for example, assert fibonacci(10) == 55 or proper unit tests using pytest.

8. Most Jupyter notebooks should be converted to normal python scripts. I write more on that topic in the discussion below. Exceptions to this include guides, presentations, and one-off exploratory research.

9. Critical parts require serious unit testing or, even better, TDD. This even applies to the seemingly obvious parts! For example, the generation of anchor boxes in object detection models should be thoroughly tested. If you mess this up, no network architecture and no amount of data will correct your mistake. I’ve learned this lesson the painful way, so please be smarter than I was in this case.

10. Above all else, apply reason. Remember that you’re trying to find the right balance, and then take decisions that fit your specific case.

Test evolution

Contrary to classical software development, where code is written with deployment in mind, in a research setting the code has a more complex lifecycle. A one-off idea can lead to a dead end or to a breakthrough that survives long enough in the world of research to be applied later in the real world. So remember to adjust your testing strategy accordingly. I invite you to think of developing tests as a process that accompanies code as the code matures and comes closer to production. If something is being reused more and more, come back to it and put some effort into testing it more thoroughly.

Test tricks

Overfitting

Isn’t that something we’re trying to avoid? Normally, yes. But we can use that property to our advantage when testing a pipeline. Before running training that costs you a lot of time and electricity, you can create a dummy dataset with one (or a very limited number of) examples. If everything is working correctly, you should be able to see the model converge very quickly, overfitting to the single sample and becoming almost perfect in predicting it.

If you’re using pytorch-lightning (a library that I highly recommend, and a topic for another blog post), checkout the overfit-batches flag.

Trend tests

I first saw this idea here. It’s a very simple yet powerful concept.

Machine learning code is rarely as deterministic and as easily calculable as Fibonacci. I for one don’t remember the correct answer to the exact value of softmax([1,2,8]) so I can’t simply write assert softmax([1,2,8]) == correct_answer. In trend testing, we test the properties of the function. For example, we can write:

result = softmax([1,2,8])

assert result[1] < result[2]

assert result.sum() == 1

You can check for all kinds of properties, so be creative!

Pytorch’s named tensors

This is a very useful trick, but be warned that the API is unstable. This essentially allows you to name dimensions so that you never confuse the batch axis with the channel axis ever again.

Misc tools

You really, really, should know about pytest, pre-commit, CI, linters, formatters, loggers and data versioning.

Jupyter notebook vs python scripts

Don’t get me wrong. I adore Jupyter notebooks. They’re an amazing tool that I frequently use. Their flexibility is a great strength, but also, from the reproducibility perspective, their biggest flaw. It is possible to run the cells in any order and that might be a nightmare for the person inheriting your code. Unless you remember each time to reset the kernel, run everything from the beginning, check the outputs before committing the file, then you’re likely to run into errors the next time that you try to run the code. How do you enforce such checkups across the whole team for a period of weeks? You might be lucky and have a very self-disciplined team, or you must just accept that you simply can’t enforce this requirement.

.ipynb -> .py conversion

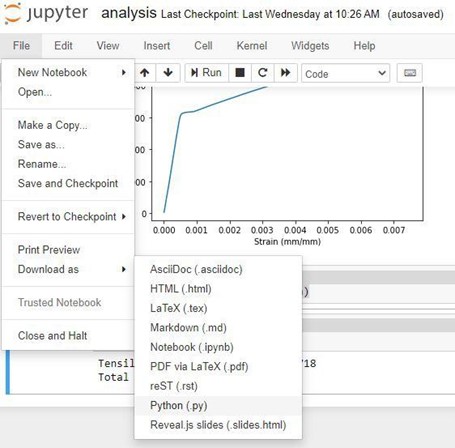

The simple solution is to convert all notebooks to python scripts. You can do that straight from the Jupyter File menu:

(picture courtesy of https://pythonforundergradengineers.com)

In this way, notebooks become scripts and suddenly gain magic properties. They now: have a name, which hopefully explains what they do; can only be run in a sequential manner; are easy to handle via git because the changes to a file are clearly visible; and, after some re-structuring, lend themselves to automated testing.

So a good rule of thumb is that, unless you’re trying to explain something like you would to a five-year old, leading someone through your code and results with at least twice as many markdown comments as are really required, then convert all notebooks to python files.

This also requires you to think for a moment about how to name the file. And people will also thank you if you take five minutes to write three Docstring sentences that describe how to run the file. And maybe you could refactor some cells into functions. And give a moment's thought to both if name == " main ": and def main(). Oh, and then switch from passing parameters via variables in the beginning of the script to using argparse. Or even something like hydra. And then test thoroughly…

With all that done, whoever uses your code a month later from now certainly owes you a cup of coffee :)

Conclusions

Above all else, think about the consequences of making or not making the above changes! Apply whatever amount of testing is reasonable, remembering that it’s a delicate balance.

Literature

There is a lot of literature on testing, but very few sources focus on testing research applications. I chose this topic because I couldn’t find a good practical guide to testing in the ML environment. Here are some resources that I looked into, where the list below is in no particular order:

- https://goodresearch.dev/

- https://pythonforundergradengineers.com/writing-tests-for-scientific- code.html

- https://the-turing-way.netlify.app/reproducible-research/testing.html

- https://bitbloom.tech/news/pragmatic-unit-testing-for-scientific-codes

- https://pythonforundergradengineers.com/writing-tests-for-scientific- code.html

- https://best-practice-and-impact.github.io/qa-of-code-guidance/testing_code.html

- https://www.youtubcom/watch?v=0ysyWk-ox-8

- https://scicomp.stackexchange.com/questions/206/is-it-worthwhile-to- write-unit-tests-for-scientific-research-codes

Thanks for staying with me to the end of the article!